Transform your venue into a calibrated 3D stage. ArUco markers map camera positions, depth estimation builds point clouds, and moving heads auto-calibrate their pan/tilt ranges. Everything runs on the orchestrator and works with any camera hardware.

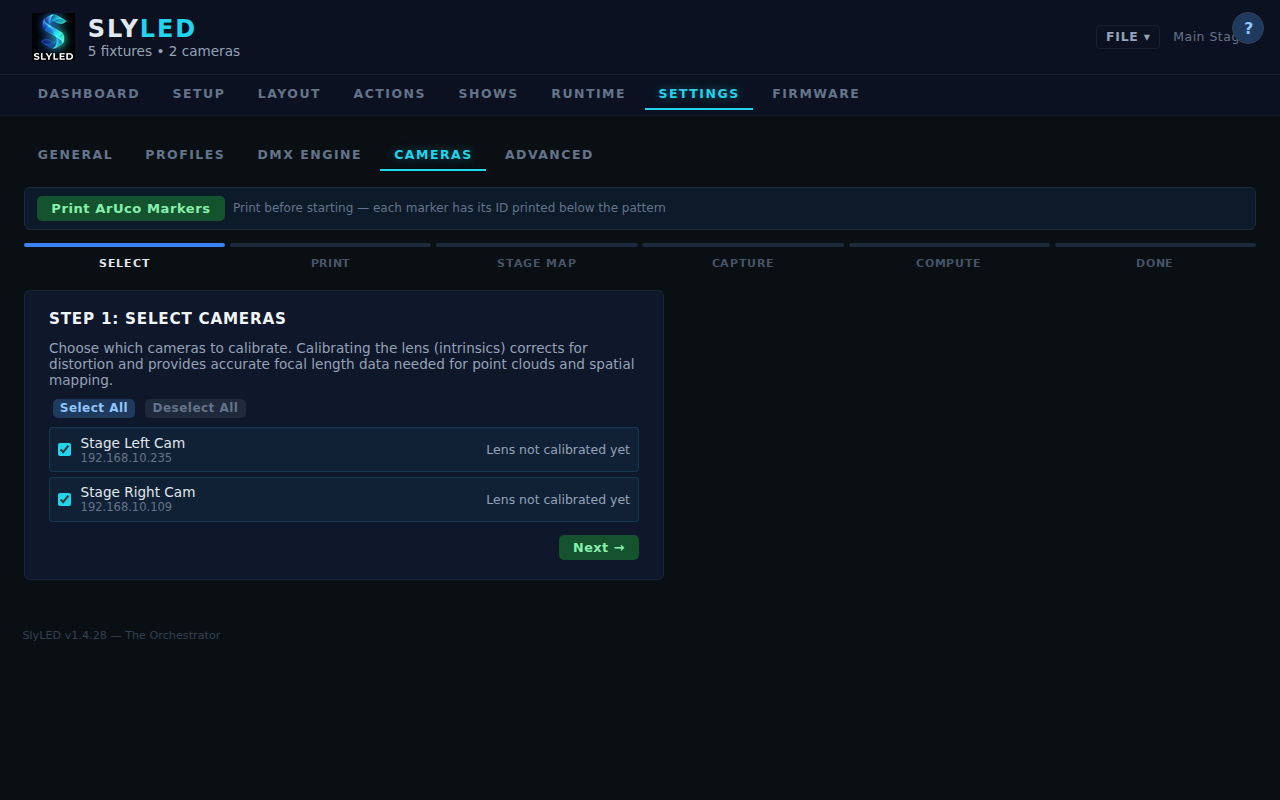

- ArUco marker detection runs on the orchestrator, so any camera works

- Stage Map: solvePnP computes each camera's 3D pose from floor markers

- Depth-Anything-V2 monocular depth estimation builds environment point clouds

- RANSAC surface detection: floor, walls, and obstacles

- Adaptive settle time (0.8–2.5 s) with double-capture verification

- Boundary-aware BFS: stops when the beam leaves the camera's view
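Assuming Stage Map uses OpenCV's `solvePnP`, the call returns a rotation vector `rvec` and translation `tvec` that map stage coordinates into the camera frame (X_cam = R·X_stage + t). The step that turns this into a camera position on stage is C = -Rᵀt. Below is a minimal pure-Python sketch of that step. `rodrigues` follows the same axis-angle convention as `cv2.Rodrigues`; both function names are hypothetical, and the sketch assumes the marker corners were passed to `solvePnP` in stage millimetres.

```python
import math

Vec3 = tuple[float, float, float]
Mat3 = list[list[float]]


def rodrigues(rvec: Vec3) -> Mat3:
    """Axis-angle vector -> 3x3 rotation matrix (cv2.Rodrigues convention)."""
    theta = math.sqrt(sum(x * x for x in rvec))
    if theta < 1e-12:  # no rotation
        return [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
    kx, ky, kz = (x / theta for x in rvec)  # unit rotation axis
    c, s, v = math.cos(theta), math.sin(theta), 1.0 - math.cos(theta)
    return [
        [c + kx * kx * v, kx * ky * v - kz * s, kx * kz * v + ky * s],
        [ky * kx * v + kz * s, c + ky * ky * v, ky * kz * v - kx * s],
        [kz * kx * v - ky * s, kz * ky * v + kx * s, c + kz * kz * v],
    ]


def camera_position(rvec: Vec3, tvec: Vec3) -> Vec3:
    """Hypothetical helper: camera centre in stage coordinates, C = -R^T t."""
    r = rodrigues(rvec)
    return tuple(-sum(r[row][col] * tvec[row] for row in range(3)) for col in range(3))
```

For a camera 3 m above the floor looking straight down in a Z-up stage frame (`rvec = (π, 0, 0)`, `tvec = (0, 0, 3000)`), `camera_position` returns approximately `(0, 0, 3000)`.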
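Depth-Anything-V2's default models predict relative depth, so metric output needs either its metric fine-tuned checkpoints or a separate scale fit. The sketch below assumes the depth map has already been converted to metric depth in millimetres along the optical axis. Each pixel is then back-projected with pinhole intrinsics `fx, fy, cx, cy` into a camera-frame point cloud. The function name and the `stride` subsampling parameter are illustrative. Applying the camera's Stage Map pose would move these points into the stage frame.

```python
Point = tuple[float, float, float]


def depth_to_points(depth: list[list[float]], fx: float, fy: float,
                    cx: float, cy: float, stride: int = 1) -> list[Point]:
    """Back-project a metric depth map (mm, along the optical axis) into
    camera-frame 3D points using a pinhole model (hypothetical helper)."""
    points: list[Point] = []
    for v in range(0, len(depth), stride):       # v = pixel row
        row = depth[v]
        for u in range(0, len(row), stride):      # u = pixel column
            z = row[u]
            if z <= 0:                            # skip missing / invalid depth
                continue
            points.append(((u - cx) * z / fx, (v - cy) * z / fy, z))
    return points
```

A `stride` above 1 keeps the cloud small enough for RANSAC on the orchestrator, at the cost of resolution.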
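A RANSAC plane fit is the usual building block for surface detection. The sketch below fits a single plane n·p + d = 0 to a point cloud in pure Python, with `threshold` as the inlier distance in the cloud's units (mm here). One plausible labelling strategy follows; it is an assumption, not taken from the project. Fit the dominant plane and call it the floor if its normal is near vertical (`is_floor_like`, assuming a Z-up stage frame). Remove its inliers and repeat for walls, then treat whatever remains as obstacles. All names are hypothetical.

```python
import math
import random
from typing import Optional

Point = tuple[float, float, float]
Plane = tuple[Point, float]  # (unit normal n, offset d) for n·p + d = 0


def _sub(a: Point, b: Point) -> Point:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _cross(a: Point, b: Point) -> Point:
    return (a[1] * b[2] - a[2] * b[1], a[2] * b[0] - a[0] * b[2], a[0] * b[1] - a[1] * b[0])


def _dot(a: Point, b: Point) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def ransac_plane(points: list[Point], threshold: float, iterations: int = 200,
                 seed: int = 0) -> tuple[Optional[Plane], list[Point]]:
    """Return the plane supported by the most points within `threshold`."""
    rng = random.Random(seed)
    best_plane: Optional[Plane] = None
    best_inliers: list[Point] = []
    for _ in range(iterations):
        p1, p2, p3 = rng.sample(points, 3)         # minimal sample: 3 points
        n = _cross(_sub(p2, p1), _sub(p3, p1))
        length = math.sqrt(_dot(n, n))
        if length < 1e-9:                           # collinear sample, no plane
            continue
        n = (n[0] / length, n[1] / length, n[2] / length)
        d = -_dot(n, p1)
        inliers = [p for p in points if abs(_dot(n, p) + d) < threshold]
        if len(inliers) > len(best_inliers):       # keep the best-supported model
            best_plane, best_inliers = (n, d), inliers
    return best_plane, best_inliers


def is_floor_like(normal: Point, max_tilt_deg: float = 10.0) -> bool:
    """True if the normal is within max_tilt_deg of the Z axis (Z-up assumed)."""
    return abs(normal[2]) >= math.cos(math.radians(max_tilt_deg))
```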
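The following sketches how an adaptive settle time with double-capture verification might work. The details are assumptions, not stated in the source. After each pan/tilt move the head gets the 0.8 s minimum. Two back-to-back frames are then grabbed, and the beam position is accepted only if both agree within `tol_px`. Otherwise the settle time grows by `step_s`, up to the 2.5 s cap. `capture` (returning the beam-spot pixel, or None) and the parameter names are hypothetical. `sleep` is injectable so the logic can be tested without waiting.

```python
import math
import time
from typing import Callable, Optional

Pixel = tuple[float, float]


def wait_for_settle(capture: Callable[[], Optional[Pixel]],
                    min_s: float = 0.8, max_s: float = 2.5,
                    step_s: float = 0.3, tol_px: float = 2.0,
                    sleep: Callable[[float], None] = time.sleep,
                    ) -> tuple[Optional[Pixel], float]:
    """Return (beam pixel, settle time used); the pixel is None if the two
    captures never agreed before max_s."""
    waited = 0.0
    settle = min_s
    while True:
        sleep(settle - waited)                   # top up to the current settle target
        waited = settle
        first, second = capture(), capture()     # double capture, back to back
        if first is not None and second is not None and math.dist(first, second) <= tol_px:
            return second, waited                # frames agree: the head has settled
        if settle >= max_s:
            return None, waited                  # still moving (or no beam) at the cap
        settle = min(settle + step_s, max_s)     # lengthen the settle time and retry
```

Fast, light fixtures then pay only the minimum wait, while slower heads get more time without a fixed worst-case delay on every point.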
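The sketch below shows a minimal boundary-aware breadth-first sweep. It assumes the fixture is stepped over a regular (pan, tilt) grid, starting from a position where the beam is visible. `probe(pan, tilt)` is a hypothetical callback that moves the head, waits for it to settle, and returns the beam-spot pixel. It returns None when the beam falls outside the camera view; such a cell is a boundary and is never expanded. The sweep therefore stays inside the visible region instead of scanning the fixture's full range. Grid offsets are kept as integers so float rounding cannot create duplicate cells.

```python
from collections import deque
from typing import Callable, Optional

Pixel = tuple[float, float]
PanTilt = tuple[float, float]


def boundary_bfs(probe: Callable[[float, float], Optional[Pixel]],
                 start: PanTilt, step: float,
                 pan_limits: PanTilt, tilt_limits: PanTilt) -> dict[PanTilt, Pixel]:
    """Return {(pan, tilt): beam pixel} for every reachable grid cell where the
    beam is visible; cells where it is not visible are never expanded."""
    visible: dict[PanTilt, Pixel] = {}
    queue = deque([(0, 0)])              # integer grid offsets from `start`
    seen = {(0, 0)}
    while queue:
        i, j = queue.popleft()
        pan, tilt = start[0] + i * step, start[1] + j * step
        pixel = probe(pan, tilt)
        if pixel is None:                # beam left the camera view: boundary cell
            continue
        visible[(pan, tilt)] = pixel
        for ni, nj in ((i + 1, j), (i - 1, j), (i, j + 1), (i, j - 1)):
            npan, ntilt = start[0] + ni * step, start[1] + nj * step
            in_range = (pan_limits[0] <= npan <= pan_limits[1]
                        and tilt_limits[0] <= ntilt <= tilt_limits[1])
            if in_range and (ni, nj) not in seen:
                seen.add((ni, nj))
                queue.append((ni, nj))
    return visible
```

For a 5×5 visible patch, this probes the 25 visible cells plus the 20 boundary cells around them, and nothing beyond.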

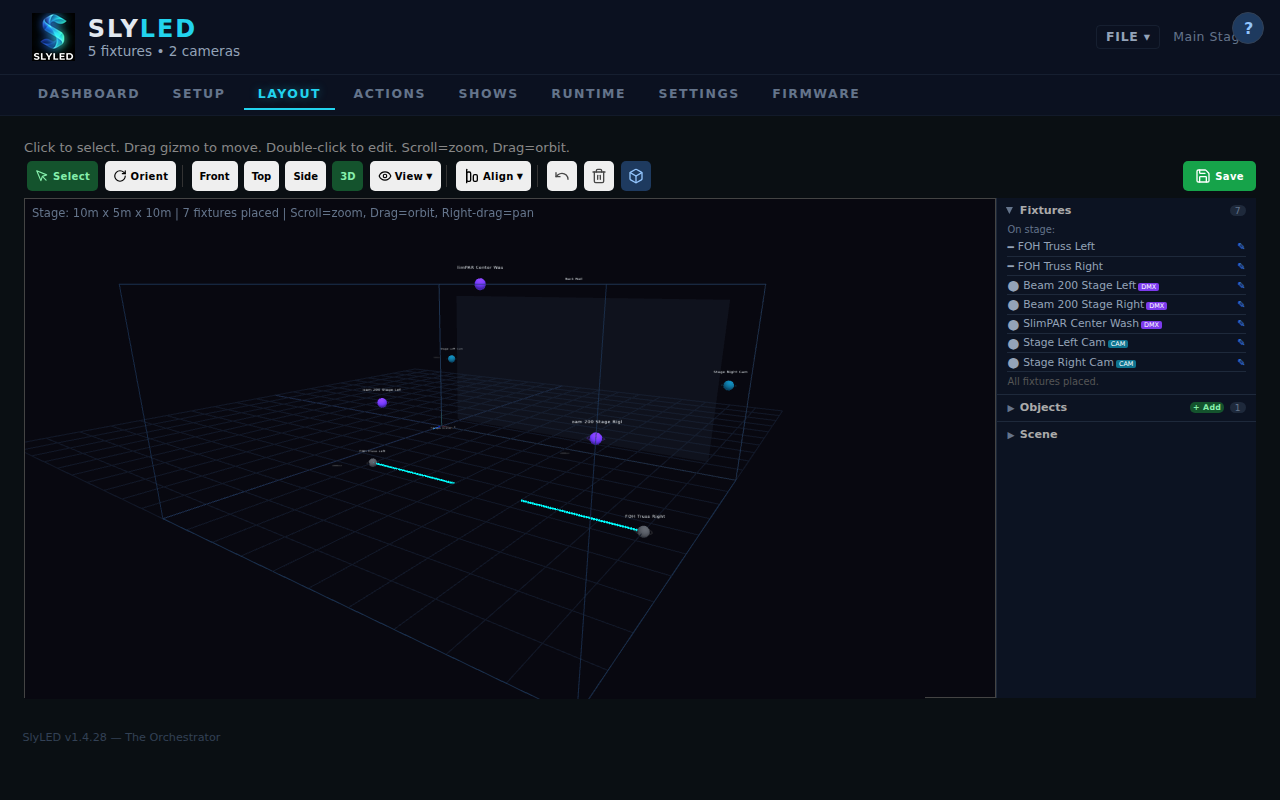

- Per-fixture light maps: (pan, tilt) → (x, y, z) in stage mm

- Stereo triangulation from 2+ cameras for object tracking

- Beam-as-structured-light refines 3D model during calibration

- Point cloud + calibration data included in .slyshow project files
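A per-fixture light map can be pictured as stage positions sampled on a regular (pan, tilt) grid. The sketch below assumes that layout; the `LightMap` class, its fields, and the grid indexing are hypothetical and do not describe the `.slyshow` format. `lookup` bilinearly interpolates the (x, y, z) position in stage mm from the four surrounding samples. `aim_at` is a coarse inverse: it returns the calibrated (pan, tilt) whose sample lands nearest a target point.

```python
import math
from dataclasses import dataclass, field
from typing import Optional

Vec3 = tuple[float, float, float]


@dataclass
class LightMap:
    """Hypothetical light map: stage positions (mm) sampled on a regular
    (pan, tilt) grid; sample (i, j) sits at pan0 + i*step, tilt0 + j*step."""
    pan0: float
    tilt0: float
    step: float
    samples: dict[tuple[int, int], Vec3] = field(default_factory=dict)

    def lookup(self, pan: float, tilt: float) -> Optional[Vec3]:
        """Bilinear interpolation; None unless all four surrounding samples exist."""
        u = (pan - self.pan0) / self.step
        v = (tilt - self.tilt0) / self.step
        i, j = math.floor(u), math.floor(v)
        fu, fv = u - i, v - j
        keys = ((i, j), (i + 1, j), (i, j + 1), (i + 1, j + 1))
        corners = [self.samples.get(k) for k in keys]
        if any(c is None for c in corners):
            return None                    # outside the calibrated region
        weights = ((1 - fu) * (1 - fv), fu * (1 - fv), (1 - fu) * fv, fu * fv)
        return tuple(sum(w * c[axis] for w, c in zip(weights, corners))
                     for axis in range(3))

    def aim_at(self, target: Vec3) -> tuple[float, float]:
        """Coarse inverse: (pan, tilt) of the sample nearest to `target`."""
        (i, j), _ = min(self.samples.items(), key=lambda kv: math.dist(kv[1], target))
        return self.pan0 + i * self.step, self.tilt0 + j * self.step
```

A finer inverse could refine `aim_at`'s result by searching the interpolated map locally, but nearest-sample is enough to show the idea.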
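Triangulation from two or more cameras can be posed as a least-squares ray intersection. The goal is the point p that minimises the summed squared distance to every viewing ray, which reduces to solving Σ(I - d dᵀ) p = Σ(I - d dᵀ) o. The pure-Python sketch below assumes each ray (origin `o` at the camera centre, direction `d`) is already expressed in stage coordinates. One way to get `d` is to rotate the pixel's normalised direction ((u - cx)/fx, (v - cy)/fy, 1) by the transpose of the camera's rotation matrix. The function names are hypothetical, and this is not necessarily how the project triangulates.

```python
import math

Vec3 = tuple[float, float, float]


def _det3(m: list[list[float]]) -> float:
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def triangulate(rays: list[tuple[Vec3, Vec3]]) -> Vec3:
    """Least-squares point closest to all (origin, direction) rays.
    Needs at least two non-parallel rays."""
    a = [[0.0] * 3 for _ in range(3)]
    b = [0.0] * 3
    for origin, direction in rays:
        norm = math.sqrt(sum(x * x for x in direction))
        d = [x / norm for x in direction]
        for r in range(3):
            for c in range(3):
                m = (1.0 if r == c else 0.0) - d[r] * d[c]  # (I - d d^T)[r][c]
                a[r][c] += m
                b[r] += m * origin[c]
    det = _det3(a)
    if abs(det) < 1e-12:
        raise ValueError("need at least two non-parallel rays")
    solution = []
    for col in range(3):                  # Cramer's rule for the 3x3 system
        m = [row[:] for row in a]
        for r in range(3):
            m[r][col] = b[r]
        solution.append(_det3(m) / det)
    return (solution[0], solution[1], solution[2])
```

Each added camera contributes one more term to the same 3×3 system, so the cost does not grow with image size.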
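One way beam spots could refine the 3D model is sketched below; this is an assumption, not the documented method. Depth-Anything-V2's relative models predict depth only up to an unknown scale and shift. Pixels where the beam's 3D position is known (for example, from triangulation) can therefore anchor a least-squares fit of `s` and `t` in target ≈ s·pred + t. The fit is done in inverse-depth (disparity) space, a common convention for affine-invariant depth models. The function names are hypothetical.

```python
import math


def fit_scale_shift(pred: list[float], target: list[float]) -> tuple[float, float]:
    """Least-squares s, t minimising sum((s * p + t - y)^2) over anchor pixels."""
    n = len(pred)
    if n < 2:
        raise ValueError("need at least two anchor points")
    sx, sy = sum(pred), sum(target)
    sxx = sum(p * p for p in pred)
    sxy = sum(p * y for p, y in zip(pred, target))
    denom = n * sxx - sx * sx
    if denom == 0:
        raise ValueError("anchor predictions are all identical")
    s = (n * sxy - sx * sy) / denom
    t = (sy - s * sx) / n
    return s, t


def to_metric_depth(pred_disparity: float, s: float, t: float) -> float:
    """Map a relative disparity to metric depth via the fitted inverse depth."""
    inv_depth = s * pred_disparity + t
    return 1.0 / inv_depth if inv_depth > 0 else math.inf
```

Here the targets would be `1 / z` for each beam spot's known depth `z` in mm. Each new spot observed during calibration adds an anchor and tightens the fit.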